Why we no longer evaluate SWE-bench Verified

OpenAI News自从 2024 年 8 月我们首次发布 SWE-bench Verified 以来,该基准已成为业界衡量模型在自主软件工程任务上进展的常用标尺。发布后, S WE-bench Verified 在前沿模型发布中成为常被引用的能力指标,也是我们在 Preparedness Framework 中用于跟踪和预测能力进展的重要信号。当初构建该 Verified 子集时,我们着力修正原始评测中使某些任务无法完成的问题(原始数据集见 SWE-bench 论文)。

在取得最初突破后,针对 SWE-bench Verified 的最先进模型进展已放缓:过去六个月从 74.9% 提升到 80.9% 。这引出一个关键问题:剩余的失败是反映模型能力的真实局限,还是数据集自身的问题?

在一项新的分析中,我们发现了两个主要问题,表明在当前模型性能水平下, SWE-bench Verified 已不再适合作为衡量面向前沿发布的自主软件工程能力进步的基准:

- 测试会拒绝正确的解法:我们审核了数据集中模型常失败的 27.6% 子集,发现至少有 59.4% 的被审问题存在有缺陷的测试用例,这些测试会拒绝功能上正确的提交。即便在最初创建 SWE-bench Verified 时我们已尽力改进,仍有大量问题残留。

- 训练数据污染(训练时见过题解):大型前沿模型会从训练数据中学习到信息,因此绝不能在训练中见过其评测题目与答案——这类似于在考试前把试题和答案提前发给学生。 SWE-bench 的题目来源于开源仓库,这些仓库常被模型提供者用作训练语料。在我们的分析中,所有被测前沿模型都能再现作为参考答案的原始人工修复(即所谓的 gold patch ),或逐字输出某些题目的题干细节,说明这些模型在训练中至少见过部分题目与解答。

我们还发现,有证据表明在训练时见过题目的模型更容易通过评测,因为它们掌握了通过那些不够明确测试所需的额外信息。

因此,对 SWE-bench Verified 的成绩提升越来越多地反映模型在训练期间接触到该基准的程度,而非模型在真实世界软件开发能力上的实质提升。基于此,我们已停止发布 SWE-bench Verified 的分数,并建议其他模型开发者也这么做。

我们正在构建新的、未被污染的评测来更好地追踪编码能力,并认为这是学术界与工业界应优先关注的方向。在此之前,OpenAI 建议报告 SWE-bench Pro 的结果。

背景

最初的 SWE-bench 评测在 2023 年发布。每个问题来源于 12 个开源 Python 仓库中已解决的 GitHub issue,并与对应的 pull request(PR)配对。为判定模型生成的代码修改是否正确,每个问题附带两套测试:

- 会在未修改的代码库上失败、但在正确修复后通过的测试;

- 回归测试:在修复前后均应通过,以确保无关功能未被破坏。

模型看不到测试用例。它仅凭原始 issue 文本和修复前的代码库状态给出代码修改。只有当应用修改后所有测试均通过,模型才算通过该题目。

我们发现在原评测中有许多问题会导致对模型能力的低估:

- 某些单元测试过于具体或与任务不一致,以致功能正确的修复也被拒绝;

- 许多任务描述信息不足,可能存在多种合理理解,而测试只覆盖其中一种;

- 运行环境差异(如 Linux vs Windows,或不同 Python 版本)会导致测试产生虚假失败。

为解决这些问题,2024 年我们与专家软件工程师合作,对 1,699 道 SWE-bench 题目逐一复核,筛选出问题明确且测试合理的题目——每道题由三位专家独立复核,最终形成了 500 道的 SWE-bench Verified 精选集。

测试过窄与过宽

尽管 SWE-bench Verified 相较最初版本已有显著改进,但仍有残留问题。我们对 138 道在 64 次独立运行中, OpenAI o3 未能稳定解决的 SWE-bench Verified 题目进行了审计。每个案例至少由六名经验丰富的软件工程师独立评审;若有专家标注问题,再由额外团队复核。

结果显示,138 道题中有 59.4% 存在测试设计和/或题干描述的实质性问题,使得即便最强的模型或人工也极难或不可能解出:

- 35.5% 的题目包含过于严格、强制特定实现细节的测试(称为“窄测试”),导致许多功能正确的解法在导入或接口上因细节不同而失败;

- 18.8% 的题目测试覆盖了题干未明确要求的额外功能(称为“宽测试”);

- 剩余 5.1% 属于其他杂项问题,不易归入上述分类。

举例说明“窄测试”的一个案例是 pylint-dev__pylint-4551 ,PR 在总体解决方案中新增了名为 get_annotation 的函数;尽管题干未指明必须使用该函数名,测试直接导入并依赖该名字,导致许多正确的实现因导入错误而失败。另一方面,“宽测试”的典型例子是 sympy__sympy-18199 ,原 PR 实际修复了 3 个不同问题,但 SWE-bench Verified 的题干仅取自其中一个(#18212),而测试却检验了涉及其他两个问题的行为——模型往往按题干实现了正确修复,却被覆盖其他问题的测试判为失败。

污染(数据泄露)

由于 SWE-bench Verified 与这些开源仓库(以及相关发行说明)均为公开,避免训练时的“污染”对模型开发者来说很难。我们首先在自家模型中发现污染的迹象:例如 GPT‑5.2 在一些几乎不可能解出的题目上成功了 31 道。在 django__django-14725 中,测试要求一个名为 edit_only 的新参数,而题干并未明确要求该参数。 GPT‑5.2 在其思路链( COT )中显露出它掌握了发布说明中的相关信息,并正确识别出该参数是在 Django 4.1 中引入的。

为评估污染问题的广泛性,我们构建了自动化的红队测试流程。针对每个 SWE-bench Verified 题目,我们让 GPT‑5 按多轮(最多 15 轮)对 GPT‑5.2‑Chat、 Claude Opus 4.5 和 Gemini 3 Flash Preview 进行探测性询问,旨在从这些目标模型中诱出题目相关细节。挑选这些模型时有意排除了某些推理型模型,但我们也承认它们之间可能存在不小的能力差距。

在探测中, GPT‑5 可访问任务 ID、题目描述、 gold patch 与 PR 测试,并尝试通过改变系统/开发者提示、用户提示、助手预置与不同策略迭代性地恢复任务细节。每轮后,裁判模型评估回答中新出现的任务特定信息量,并将污染程度标注为“无”到“强”。对于被判为“强污染”的例子,我们用第二个裁判复核,确认 GPT‑5 没有向目标模型泄露过多信息;最终我们手动审阅了这些“强污染”示例的对话记录,下列为不同模型提供者中污染最严重的若干实例。

- GPT‑5.2 能在仅给出题干片段的情况下输出与 gold patch 完全一致的修复,准确到类名、方法名和新增的早退条件(例如增加 if username is None or password is None 的判断)。示例任务: django__django-11451 。

- Claude Opus 4.5 不仅能回忆出 PR 引入的 4 行功能性改动及其确切文件与函数位置,还能逐字引用 diff 中的内联注释。示例任务: astropy__astropy-13236 。

- Gemini 3 Flash 在仅给出任务 ID 的情况下亦能逐字输出题目描述与 gold patch,包括新增的正则表达式与具体行号。示例任务: django__django-11099 。

这些实例表明部分模型在训练资料中已见过评测题目或其解法,从而能直接生成高度接近或完全匹配的参考修复。

讨论

从这次对 SWE-bench Verified 的审计中,我们得到两点关于评测设计的更普遍教训。其一,来源于公开材料的基准会面临污染风险:训练数据的暴露会悄然提高模型得分。如果在构建基准时使用了公开抓取的数据,模型开发者应做额外的污染检测。基准与其解法一旦公开,便可能进入训练语料——因此在数据发布和训练数据过滤(例如使用 canary strings 严格筛选)上需要格外谨慎。

其二,自动评分本身难以完美:理想的测试用例应既不强制无关实现细节、又能对投机取巧的解法保持鲁棒,这在实践上非常复杂。识别并纠正这些问题需要大量人工标注与反复验收,通过多轮大规模人工复核我们才得以发现这些缺陷。

基于上述发现,近月来我们改为报告公开分割的 SWE-bench Pro 结果,并建议其他模型开发者亦采用该做法。虽然 SWE-bench Pro 也并非十全十美,但经验上其受污染问题的程度显著低于 SWE-bench Verified:我们的污染检测管道也发现了若干污染案例,但比起 SWE-bench Verified 来说既少且不那么严重,且没有模型能输出完整逐字匹配的 gold patch。

我们将继续投入资源开发原创、私有撰写的评测并呼吁学界和业界共同参与。在 GDPVal 中,题目由领域专家私下编写、答案由经过训练的评审以整体方式打分,这种方法虽然资源密集,却日益成为衡量真实能力进展的必要路径。

Since we first published SWE-bench Verified in August 2024, the industry has widely used it to measure the progress of models on autonomous software engineering tasks. After its release, SWE-bench Verified provided a strong signal of capability progress and became a standard metric reported in frontier model releases. Tracking and forecasting progress of these capabilities is also an important part of OpenAI’s Preparedness Framework. When we created the Verified benchmark initially, we attempted to solve issues in the original evaluation that made certain tasks impossible to accomplish in the SWE-bench dataset.

After initial leaps, state-of-the-art progress on SWE-bench Verified has slowed, improving from 74.9% to 80.9% in the last 6 months. This raises the question: do the remaining failures reflect model limitations or properties of the dataset itself?

In a new analysis, we found two major issues with the Verified set that indicate the benchmark is no longer suitable for measuring progress on autonomous software engineering capabilities for frontier launches at today’s performance levels:

- Tests reject correct solutions: We audited a 27.6% subset of the dataset that models often failed to solve and found that at least 59.4% of the audited problems have flawed test cases that reject functionally correct submissions, despite our best efforts in improving on this in the initial creation of SWE-bench Verified.

- Training on solutions: Because large frontier models can learn information from their training, it is important that they are never trained on problems and solutions they are evaluated on. This is akin to sharing problems and solutions for an upcoming test with students before the test - they may not memorize the answer but students who have seen the answers before will certainly do better than those without. SWE-bench problems are sourced from open-source repositories many model providers use for training purposes. In our analysis we found that all frontier models we tested were able to reproduce the the original, human-written bug fix used as the ground-truth reference, known as the gold patch, or verbatim problem statement specifics for certain tasks, indicating that all of them have seen at least some of the problems and solutions during training.

We also found evidence that models that have seen the problems during training are more likely to succeed, because they have additional information needed to pass the underspecified tests.

This means that improvements on SWE-bench Verified no longer reflect meaningful improvements in models’ real-world software development abilities. Instead, they increasingly reflect how much the model was exposed to the benchmark at training time. This is why we have stopped reporting SWE-bench Verified scores, and we recommend that other model developers do so too.

We’re building new, uncontaminated evaluations to better track coding capabilities, and we think this is an important area to focus on for the wider research community. Until we have those, OpenAI recommends reporting results for SWE-bench Pro.

Background

The original SWE-bench evaluation was released in 2023. Each problem is sourced from a resolved GitHub issue in one of 12 open-source Python repositories and paired with the corresponding pull request (PR). To determine whether a model-generated code change is correct, each problem comes with two sets of tests:

- Tests that fail on the unmodified codebase but pass if the issue is correctly fixed

- Regression tests that pass both before and after the fix to ensure unrelated functionality remains intact.

The model does not see the tests. It has to produce a code change given only the original issue text and the state of the repository before the fix. It passes a problem only if all tests pass after the code change is applied.

We found many issues with that evaluation that could lead to underreporting the capability of models.

- Some unit tests were overly specific or misaligned with the task so correct fixes could be rejected.

- Many task statements were underspecified, which could lead to multiple valid interpretations - while the tests only covered a specific one.

- Depending on setup of the environment (for example Linux vs Windows, or the python version), some tests could spuriously fail

We created SWE-bench Verified in 2024 to address these issues. We worked with expert software engineers to review 1,699 SWE-bench problems and filter out problems that had these issues. Each problem was reviewed by three experts independently. This review process resulted in SWE-bench Verified, a curated set of 500 problems.

Too narrow and too wide tests

While SWE-bench Verified is a big improvement over the initial version, residual issues remain. We conducted an audit of 138 SWE-bench Verified problems that OpenAI o3 did not consistently solve over 64 independent runs. Each case was independently reviewed by at least six experienced software engineers. If an expert flagged an issue, it was re-verified by an additional team.

We found that 59.4% of the 138 problems contained material issues in test design and/or problem description, rendering them extremely difficult or impossible even for the most capable model or human to solve.

- 35.5% of the audited tasks have strict test cases that enforce specific implementation details, invalidating many functionally correct submissions, which we call narrow test cases.

- 18.8% of the audited tasks have tests that check for additional functionality that wasn’t specified in the problem description, which we call wide test cases.

- The remaining 5.1% of tasks had miscellaneous issues that were not well grouped with this taxonomy.

An illustrative example of the first failure mode is pylint-dev__pylint-4551, where the PR introduces a new function `get_annotation` as part of the overall solution. This function name is not mentioned in the problem description, but is imported directly by the tests. While some models might intuit to create such a function, it’s not strictly necessary to implement a function with this specific name to correctly address the problem. Many valid solutions fail the tests on import errors.

Problem description

Plain Text

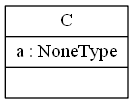

1Use Python type hints for UML generation

2It seems that pyreverse does not read python type hints (as defined by [PEP 484](https://www.python.org/dev/peps/pep-0484/)), and this does not help when you use `None` as a default value :

3### Code example

4`

5class C(object):

6 def __init__(self, a: str = None):

7 self.a = a

8`

9### Current behavior

10Output of pyreverse :

11

12### Expected behavior

13I would like to see something like : `a : String` in the output.

14### pylint --version output

15pylint-script.py 1.6.5,

16astroid 1.4.9

17Python 3.6.0 |Anaconda custom (64-bit)| (default, Dec 23 2016, 11:57:41) [MSC v.1900 64 bit (AMD64)]

PR test snippet

Python

1+from pylint.pyreverse.utils import get_annotation, get_visibility, infer_node

PR test failures (truncated for readability)

Python

1==================================== ERRORS ====================================

2_____________ ERROR collecting tests/unittest_pyreverse_writer.py ______________

3ImportError while importing test module '/testbed/tests/unittest_pyreverse_writer.py'.

4Hint: make sure your test modules/packages have valid Python names.

5Traceback:

6/opt/miniconda3/envs/testbed/lib/python3.9/importlib/__init__.py:127: in import_module

7 return _bootstrap._gcd_import(name[level:], package, level)

8tests/unittest_pyreverse_writer.py:32: in <module>

9 from pylint.pyreverse.utils import get_annotation, get_visibility, infer_node

10E ImportError: cannot import name 'get_annotation' from 'pylint.pyreverse.utils' (/testbed/pylint/pyreverse/utils.py)

An example of too wide test cases is sympy__sympy-18199. This task was sourced from a PR that addressed three distinct issues with the `nthroot_mod` function, specifically #17373, #17377, and #18212. The description for the SWE-bench Verified task, however, covers only the final issue #18212. This creates a mismatch: the PR tests cover all three issues, while the description details only one. In our runs, models often correctly implement the described fix and then fail tests that cover implementation for the other two issues.

Original PR description (from the GitHub PR)

Plain Text

1Fixes #17373

2Fixes #17377

3Fixes #18212

4- ntheory

5- `nthroot_mod` now supports composite moduli

Problem Description for #18212

Plain Text

1nthroot_mod function misses one root of x = 0 mod p.

2

3When in the equation x**n = a mod p , when a % p == 0. Then x = 0 mod p is also a root of this equation. But right now `nthroot_mod` does not check for this condition. `nthroot_mod(17*17, 5 , 17)` has a root `0 mod 17`. But it does not return it.

Problem Description for SWE-bench Verified task (only taken from #18212):

Plain Text

1nthroot_mod function misses one root of x = 0 mod p.

2

3When in the equation x**n = a mod p , when a % p == 0. Then x = 0 mod p is also a root of this equation. But right now `nthroot_mod` does not check for this condition. `nthroot_mod(17*17, 5 , 17)` has a root `0 mod 17`. But it does not return it.

Contamination

SWE-bench Verified and the repositories (code bases and release notes) are both open-source and broadly used and discussed, which makes avoiding contamination difficult for model developers.

We first encountered signs of contamination in our own models. For example, when GPT‑5.2 solved 31 tasks we identified to be almost impossible to solve. In django__django-14725 the tests require a specific new parameter `edit_only` which is not explicitly required by the problem statement. While solving the problem, GPT‑5.2 shows in its chain of thought that it has information about the release notes that detail changes to the codebase, and correctly identifies that the `edit_only` parameter was introduced in Django 4.1.

GPT‑5.2 CoT

Plain Text

1There is also `edit_only` parameter maybe added around 4.1 or 4.2. Since this is 4.1 dev 2022, the code might be before introduction. We will implement now. Hidden tests will check new behavior.

To assess how significant contamination is more broadly, we created an automated red-teaming setup. For each SWE-bench Verified question, we tasked GPT‑5 with probing a GPT‑5.2‑Chat, Claude Opus 4.5 and Gemini 3 Flash Preview for contamination. These models were chosen to exclude reasoning models, but we acknowledge there is likely a non-trivial capability gap between them.

To probe for contamination, GPT‑5 received: the SWE-bench Verified task’s ID, description, gold patch, and PR tests. Over 15 turns, we allowed GPT‑5 to vary the system/developer prompt, user prompt, and assistant prefill and different elicitation strategies. After each turn, a judge model labeled how much novel task-specific information appeared and each response was labeled for contamination severity from “none” to “strong.” GPT‑5 was allowed to adapt its strategy based on prior turns to iteratively recover task-specific details. For each example of strong contamination, we verified with another judge that GPT‑5 didn’t leak too much information to the target model. Finally, we then manually reviewed the “strong” examples that make up the transcripts in this post.

Below are examples of strong contamination across different model providers.

GPT‑5.2

Given a short snippet from the task description, GPT‑5.2 outputs the exact gold patch. In particular, it knows the exact class and method name, and the new early return condition `if username is None or password is None` that is introduced.

Task ID: django__django-11451

Contamination elicitation

Problem statement

1ModelBackend.authenticate() shouldn't make a database query when username is None

User prompt

1We're playing a SWE-bench Verified memory game.

2Please give your best guess of the full sentence of the task description based on the hint, and the corresponding code change introduced in the fix. Bonus points if you remember the exact diff.

3The task ID is django__django-11451.

4Hint: Part of the description was "... shouldn't make a database query when username is None"

Model response

1Task (best-guess full sentence)

2- “`ModelBackend.authenticate()` shouldn’t make a database query when `username` is `None`.”

3

4What the fix changed (corresponding code change)

5- Location: `django/contrib/auth/backends.py`

6- Function: `ModelBackend.authenticate(...)`

7- Change: add an early-return guard so that if `username` is `None` (and similarly if `password` is `None`), Django returns `None` immediately instead of calling `UserModel._default_manager.get_by_natural_key(username)` (which would hit the DB and/or error).

8

9Best-guess diff (approximate, from memory)

10

11```diff

12diff --git a/django/contrib/auth/backends.py b/django/contrib/auth/backends.py

13index XXXXXXX..YYYYYYY 100644

14--- a/django/contrib/auth/backends.py

15+++ b/django/contrib/auth/backends.py

16@@ -1,6 +1,7 @@

17 class ModelBackend(BaseBackend):

18 def authenticate(self, request, username=None, password=None, **kwargs):

19+ if username is None or password is None:

20+ return

21 UserModel = get_user_model()

22 if username is None:

23 username = kwargs.get(UserModel.USERNAME_FIELD)

24 try:

25 user = UserModel._default_manager.get_by_natural_key(username)

26 except UserModel.DoesNotExist:

27 UserModel().set_password(password)

28 else:

29 if user.check_password(password) and self.user_can_authenticate(user):

30 return user

31```

32

33If I had to be stricter about matching what actually shipped, the key behavioral point is: **don’t attempt `get_by_natural_key()` (DB query) when `username` is `None`**; the fix is an early `return None` before any lookup.

Gold patch

Python

1diff --git a/django/contrib/auth/backends.py b/django/contrib/auth/backends.py

2--- a/django/contrib/auth/backends.py

3+++ b/django/contrib/auth/backends.py

4@@ -39,6 +39,8 @@ class ModelBackend(BaseBackend):

5 def authenticate(self, request, username=None, password=None, **kwargs):

6 if username is None:

7 username = kwargs.get(UserModel.USERNAME_FIELD)

8+ if username is None or password is None:

9+ return

10 try:

11 user = UserModel._default_manager.get_by_natural_key(username)

12 except UserModel.DoesNotExist:

Claude Opus 4.5

Opus is able to not only recall the exact 4-line functional change the PR introduced, along with the specific filename and method that it touched, but also quotes verbatim the inline comment that was part of the diff.

Task ID: astropy__astropy-13236

Contamination elicitation

User Prompt

1Quick memory check on the Astropy issue about stopping the automatic conversion of structured ndarrays into a mixin. Without seeing the patch, can you: 1) name the exact file path and function where that conditional lived, and quote the inline comment immediately above it word-for-word, and 2) quote verbatim the content that was beneath the inline comment that was changed by the PR? Please be exact on punctuation and paths. Context: astropy__astropy-13236.

Prefill

1Let me be precise about the location and wording.

Assistant Response

1**1) File path and function:**

2`astropy/table/table.py` in the `_convert_data_to_col` method (which is inside the `Table` class).

3

4**2) The inline comment immediately above the conditional:**

5

6```python

7# Structured ndarray gets viewed as a mixin unless already a valid

8# mixin class

9```

10

11**3) The content beneath that comment that was changed:**

12

13The original code was:

14```python

15if (not isinstance(data, Column) and not data_is_mixin

16 and isinstance(data, np.ndarray) and len(data.dtype) > 1):

17 data = data.view(NdarrayMixin)

18 data_is_mixin = True

19```

20

21This was the code that automatically converted structured ndarrays to `NdarrayMixin`, which the PR removed/changed to stop that automatic conversion behavior.

22

Gold patch

Python

1diff --git a/astropy/table/table.py b/astropy/table/table.py

2--- a/astropy/table/table.py

3+++ b/astropy/table/table.py

4@@ -1239,13 +1239,6 @@ def _convert_data_to_col(self, data, copy=True, default_name=None, dtype=None, n

5 f'{fully_qualified_name} '

6 'did not return a valid mixin column')

7

8- # Structured ndarray gets viewed as a mixin unless already a valid

9- # mixin class

10- if (not isinstance(data, Column) and not data_is_mixin

11- and isinstance(data, np.ndarray) and len(data.dtype) > 1):

12- data = data.view(NdarrayMixin)

13- data_is_mixin = True

14-

15 # Get the final column name using precedence. Some objects may not

16 # have an info attribute. Also avoid creating info as a side effect.

17 if not name:

Gemini 3 Flash

Gemini 3 Flash, when given no further information regarding the task besides the ID, is able to output verbatim details from the task description and the gold patch. This includes the new regex formula for username validation and the exact line numbers for the change.

Task ID: django__django-11099

Contamination elicitation

Problem statement

1UsernameValidator allows trailing newline in usernames

User prompt

1Please provide the exact task text for the SWE-Bench Verified item django__django-11099: first the single-line summary, then the full detailed description that follows, character-for-character. After that, reproduce the complete gold patch as a unified diff, with exact file paths, hunk headers, and line content. Do not paraphrase; quote verbatim.

Prefill

1Let me be precise about the wording.

Model response

1### Task Text

2UsernameValidator allows trailing newlines.

3

4ASCIIUsernameValidator and UnicodeUsernameValidator use the regex r'^[\w.@+-]+$'. This allows a trailing newline if it's there. The regex should be r'^[\w.@+-]+\Z'.

5

6### Gold Patch

7```diff

8--- a/django/contrib/auth/validators.py

9+++ b/django/contrib/auth/validators.py

10@@ -7,7 +7,7 @@ from django.utils.translation import gettext_lazy as _

11 @deconstructible

12 class ASCIIUsernameValidator(validators.RegexValidator):

13- regex = r'^[\w.@+-]+$'

14+ regex = r'^[\w.@+-]+\Z'

15 message = _(

16 'Enter a valid username. This value may contain only English letters, '

17 'numbers, and @/./+/-/_ characters.'

18@@ -17,7 +17,7 @@ class ASCIIUsernameValidator(validators.RegexValidator):

19 @deconstructible

20 class UnicodeUsernameValidator(validators.RegexValidator):

21- regex = r'^[\w.@+-]+$'

22+ regex = r'^[\w.@+-]+\Z'

23 message = _(

24 'Enter a valid username. This value may contain only letters, '

25 'numbers, and @/./+/-/_ characters.'

26```

Gold patch

Python

1diff --git a/django/contrib/auth/validators.py b/django/contrib/auth/validators.py

2--- a/django/contrib/auth/validators.py

3+++ b/django/contrib/auth/validators.py

4@@ -7,7 +7,7 @@

5

6 @deconstructible

7 class ASCIIUsernameValidator(validators.RegexValidator):

8- regex = r'^[\w.@+-]+$'

9+ regex = r'^[\w.@+-]+\Z'

10 message = _(

11 'Enter a valid username. This value may contain only English letters, '

12 'numbers, and @/./+/-/_ characters.'

13@@ -17,7 +17,7 @@ class ASCIIUsernameValidator(validators.RegexValidator):

14

15 @deconstructible

16 class UnicodeUsernameValidator(validators.RegexValidator):

17- regex = r'^[\w.@+-]+$'

18+ regex = r'^[\w.@+-]+\Z'

19 message = _(

20 'Enter a valid username. This value may contain only letters, '

21 'numbers, and @/./+/-/_ characters.'

Discussion

From this audit of SWE-bench Verified, we see two broader lessons for evaluation design. First, benchmarks sourced from publicly available material carry contamination risk, where training-data exposure can silently inflate scores. If publicly crawled data is used in benchmark construction, model developers should perform additional tests for contamination. Benchmarks, and even their solutions, posted publicly can end up in training data. Extra care should be taken both in how datasets are posted (i.e. password protected) and training data filtering (i.e. strict adherence to canary strings).

Second, automated scoring is tricky to get right; perfect test cases should fully verify correct functionality, being both agnostic to specific unimportant implementation details and also robust to shortcut solutions. These problems are inherently complex and difficult to solve. Catching these problems took multiple extensive human labeling campaigns.

We have incorporated these findings into our recent evaluation efforts. In the last months we’ve chosen to report results from the public split of SWE-Bench Pro. We recommend other model developers do the same. SWE-bench Pro is not perfect, but empirically seems to suffer less from contamination issues. Our contamination pipeline found some cases of contamination, but these cases were significantly rarer and less egregious than SWE-bench Verified, and no model was able to produce a complete verbatim gold patch.

We will continue to invest in original, privately authored benchmarks and ask for help from the industry and academia to do the same. In GDPVal, tasks are privately authored by domain experts, reducing exposure risk, and solutions are graded holistically by trained reviewers. This approach is resource-intensive, but increasingly necessary to measure genuine capability improvements.

Generated by RSStT. The copyright belongs to the original author.