Natusamare Prolapse


Reimagining surgical care for a healthier world

Our Mission:

Innovate, educate, and collaborate to improve patient care.

Our Vision:

Reimagining surgical care for a healthier world

The SAGES Education & Research Foundation was created with the vision of a healthcare world in which all operative procedures are accomplished with the least possible physical trauma, discomfort, and loss of productive time for the patient.

The … [Read more...] about Donate to the SAGES Foundation

SAGES Leads Multi-society State of the Art Consensus Conference on Prevention of Bile Duct Injury during Cholecystectomy. Read GSN Article here.

Strategies for Minimizing Bile Duct Injuries: Adopting a Universal Culture of Safety in Cholecystectomy

The Safe Cholecystectomy Didactic Modules are now live!!

Didactic modules can be accessed at … [Read more...] about The SAGES Safe Cholecystectomy Program

Join SAGES now and enjoy the following benefits:

At least 30 hours of Category 1 CME Credit including self assessment CME credits for MOC available annually

Substantial discount for registration to SAGES Annual Meeting*

Cutting-edge education and professional development

A network of over 7,000 colleagues and surgical endoscopic … Read more... about Join SAGES

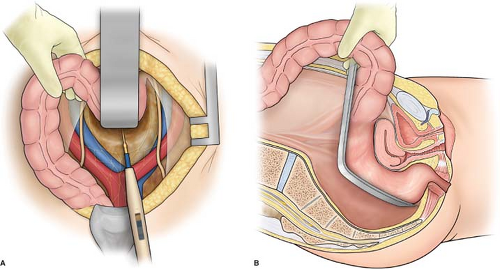

Since the first clinical report in 2009, Transanal Total Mesorectal Excision (taTME), a novel approach in rectal cancer surgery, has been increasingly adopted worldwide. With taTME, the majority of … [Read more...] about Multicenter Phase II Study of Transanal Total Mesorectal Excision (taTME) with Laparoscopic Assistance for Rectal Cancer


natusamare .tumblr.com - Tumblr - Natusamare Tumblr

SAGES - Reimagining surgical care for a healthier world

madVR - high quality video renderer (GPU assisted) - Doom9's Forum

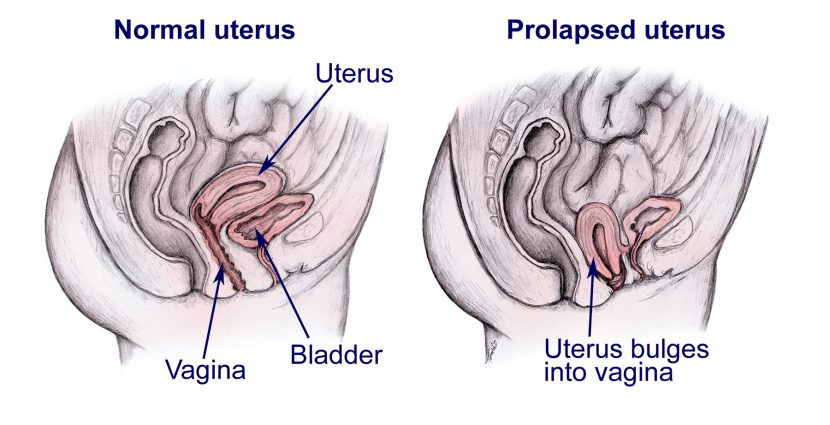

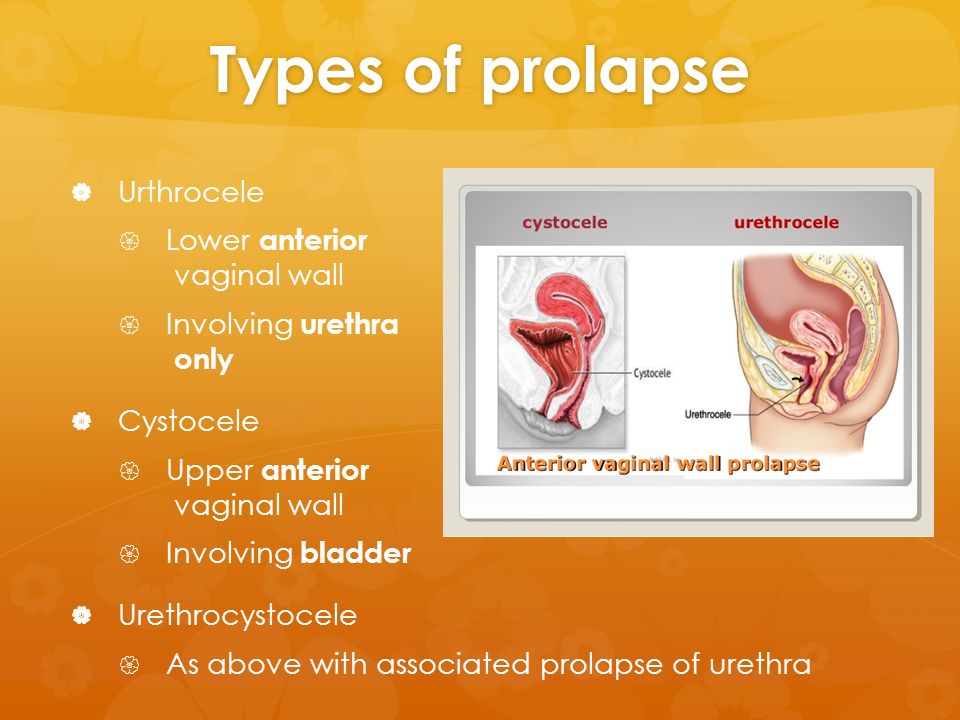

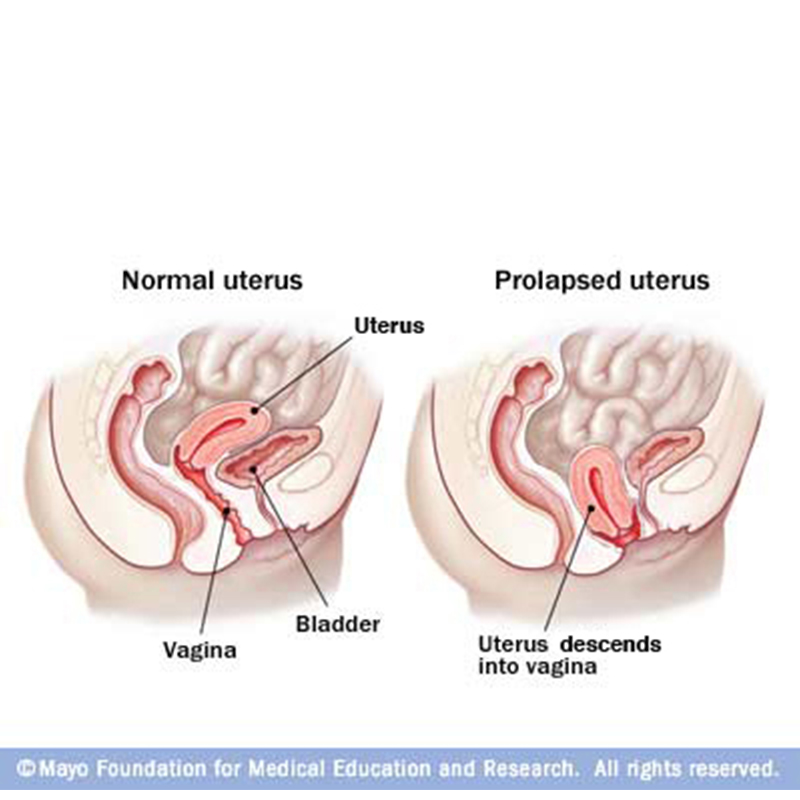

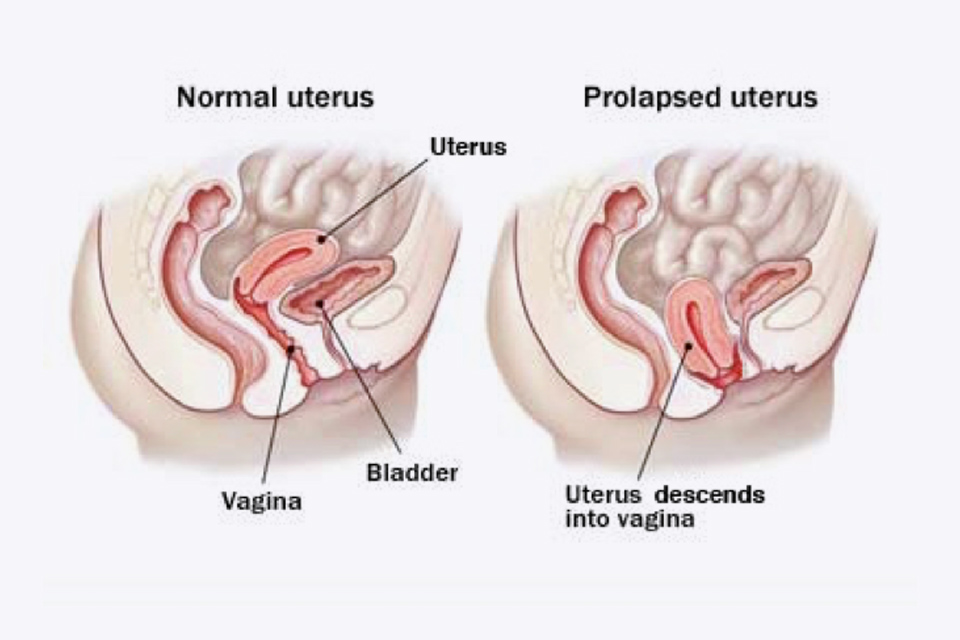

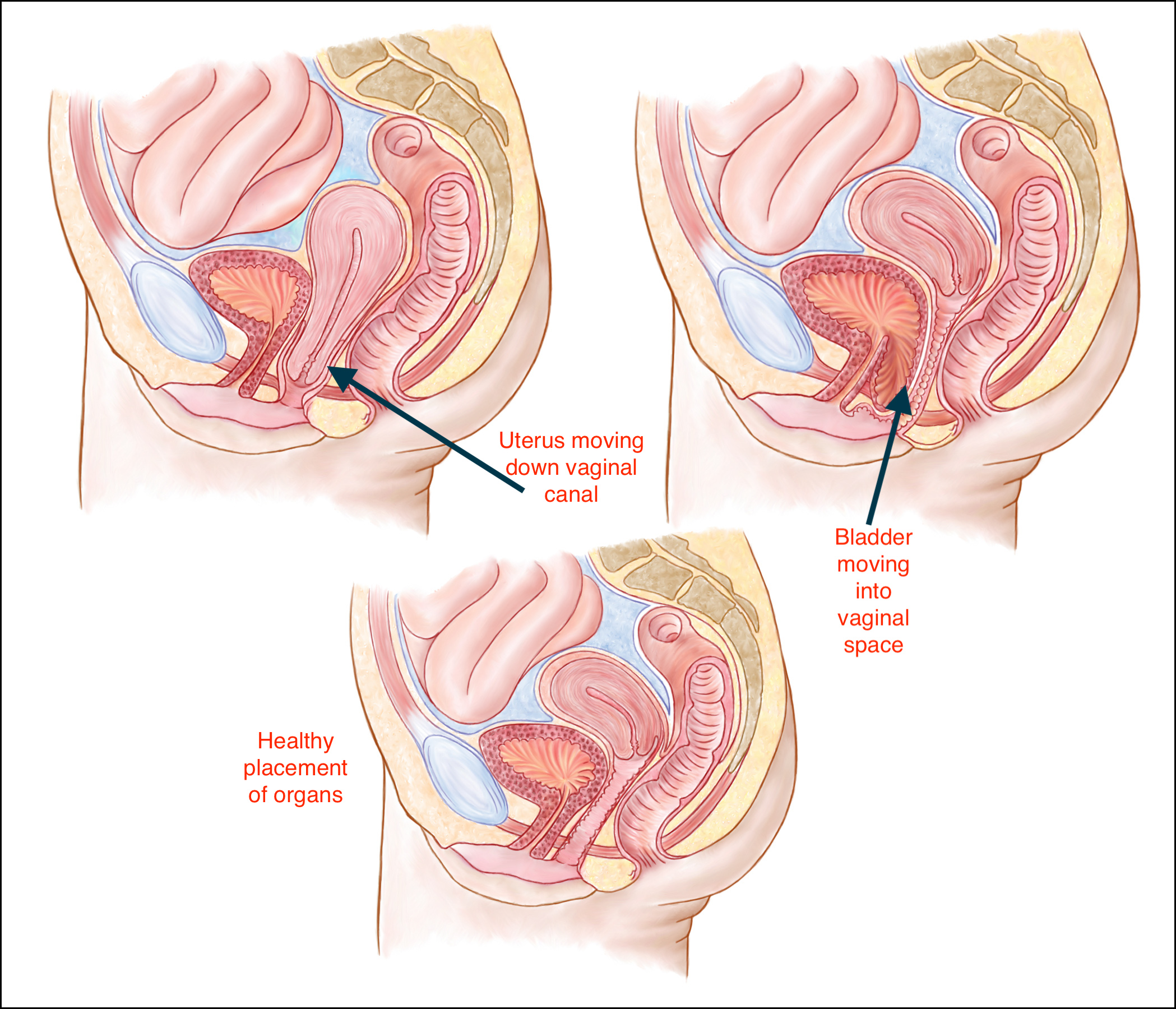

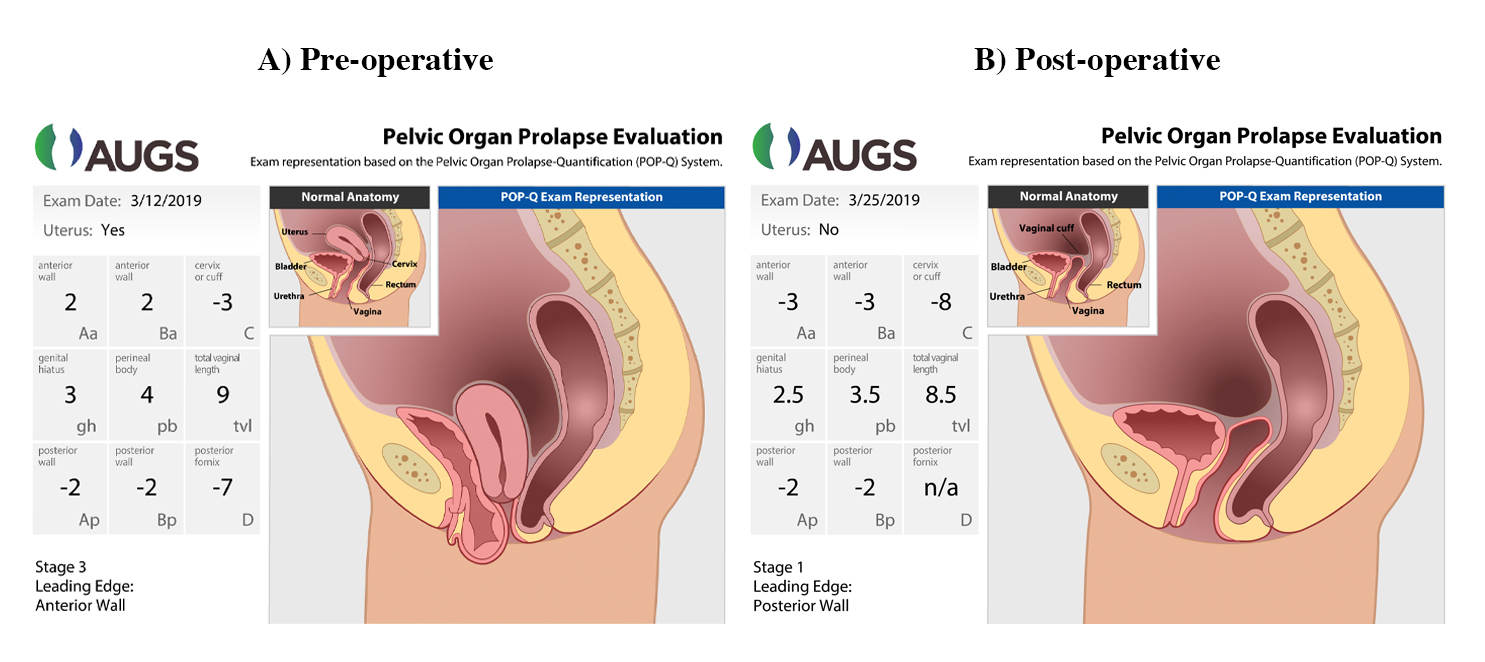

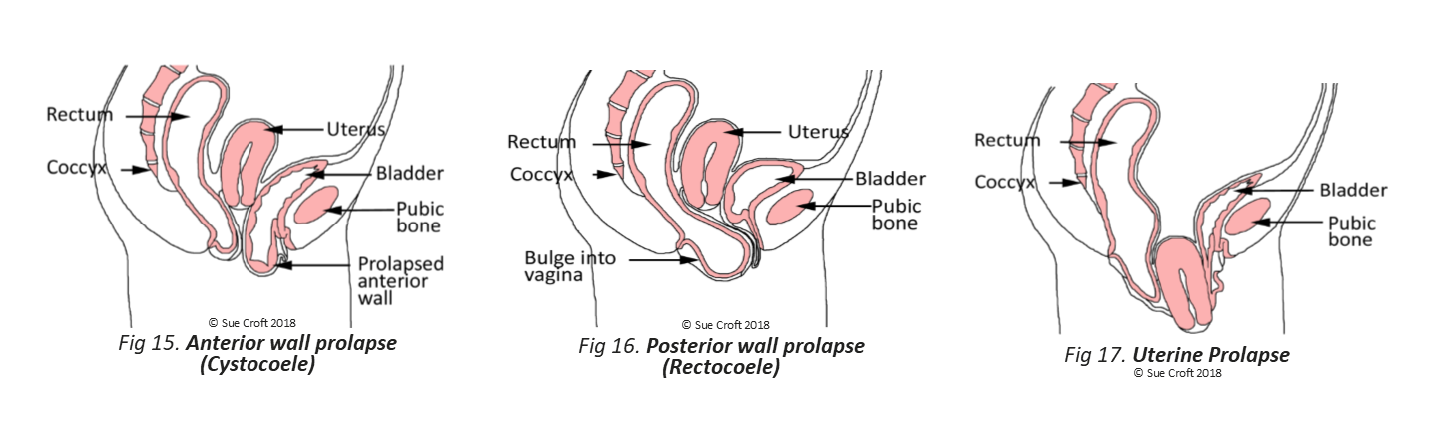

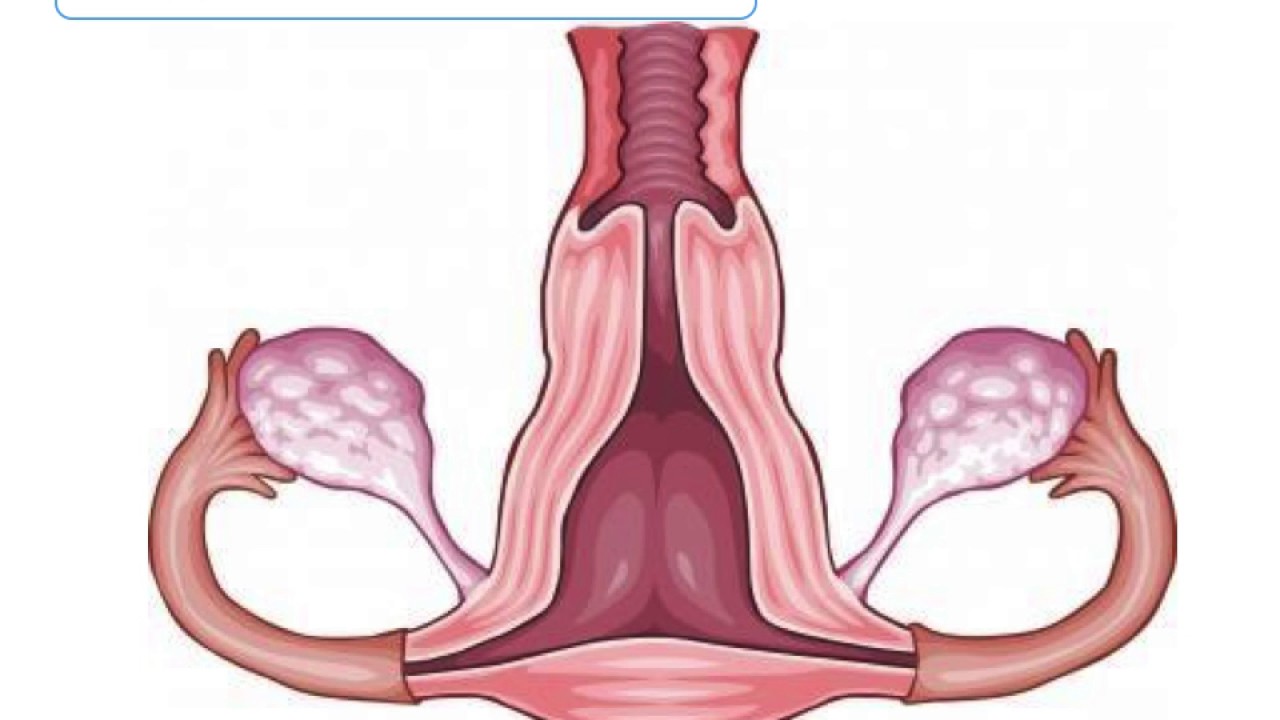

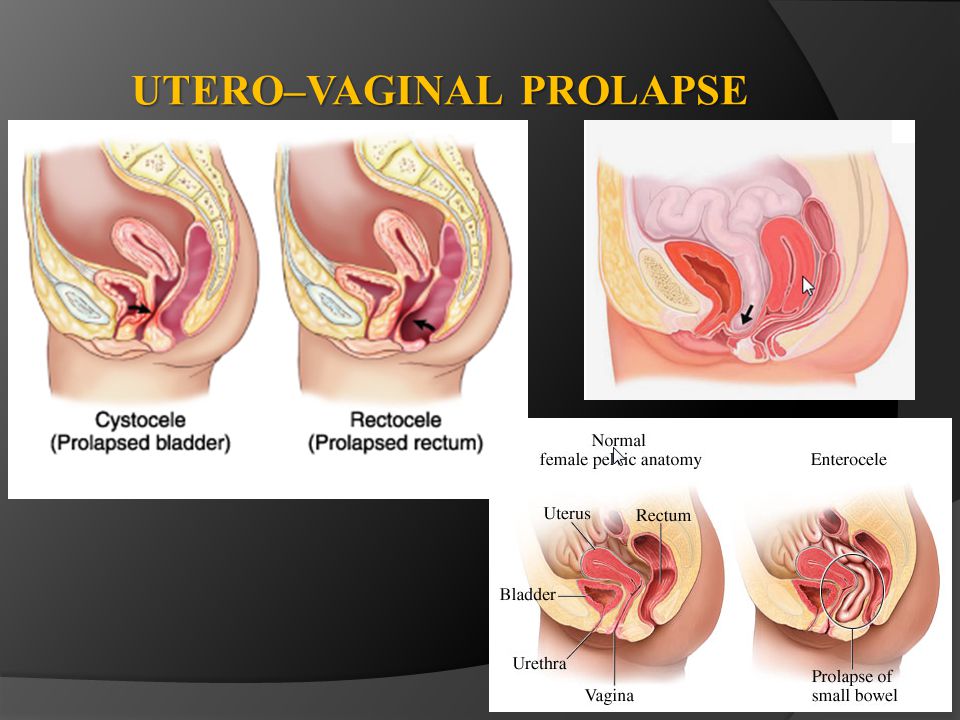

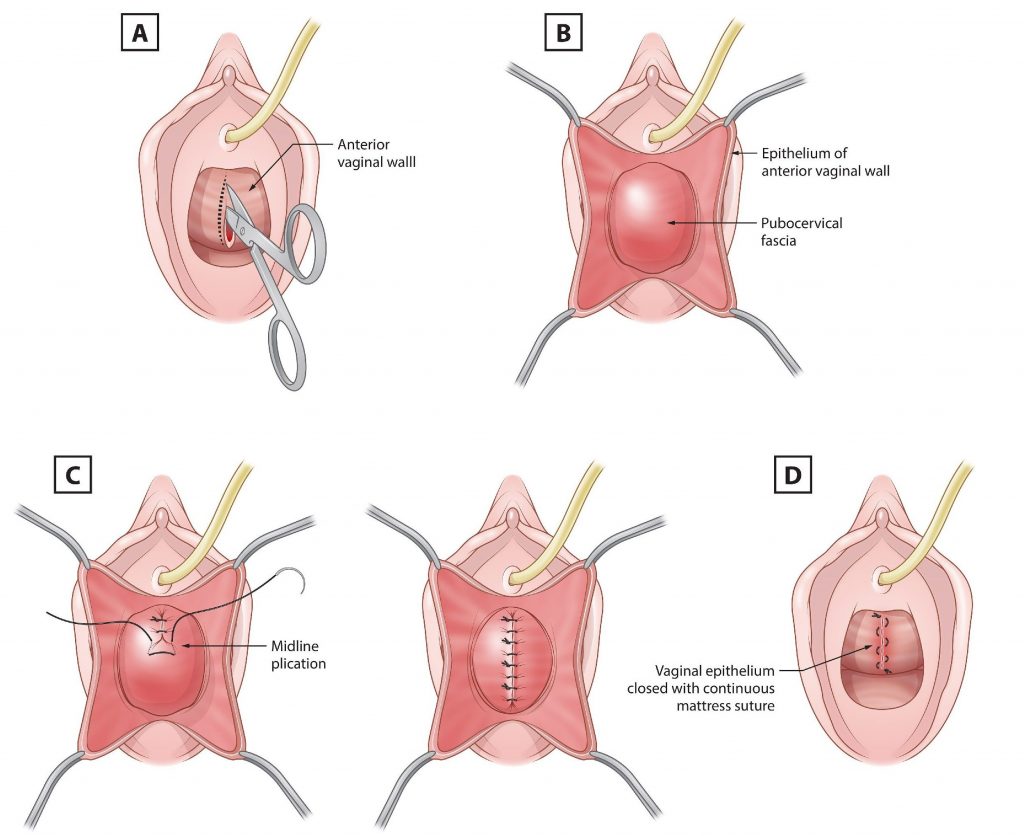

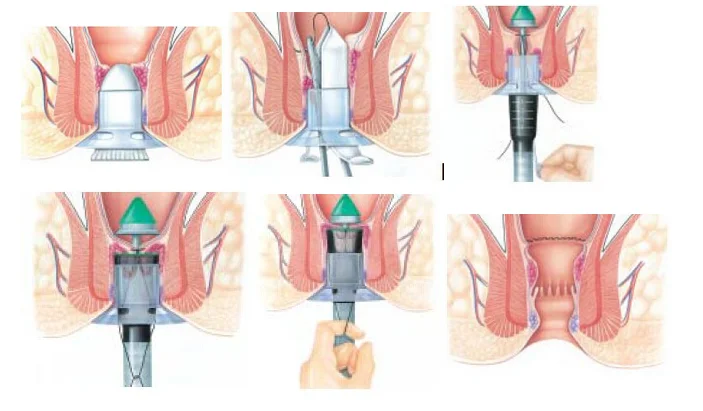

Uterine Prolapse : Risk Factors, Symptoms, and Diagnosis

Medical Xpress - medical research advances and health news

madVR - high quality video renderer (GPU assisted)

Last edited by madshi; 7th February 2019 at 20:09 .

FAQ:

A) Which output format (RGB vs YCbCr, 0-255 vs 16-235) should I activate in my GPU control panel?

Windows internally always "thinks" in RGB 0-255. Windows considers black to be 0 and white to be 255. That applies to the desktop, applications, games and videos. Windows itself never really thinks in terms of YCbCr or 16-235. Windows does know that videos might be YCbCr or 16-235, but still, all rendering is always done at RGB 0-255. (The exception proves the rule.)

So if you switch your GPU control panel to RGB 0-255, the GPU receives RGB 0-255 from Windows, and sends RGB 0-255 to the TV. Consequently, the GPU doesn't have to do any colorspace (RGB -> YCbCr) or range (0-255 -> 16-235) conversions. This is the best setup, because the GPU won't damage our precious pixels.

If you switch your GPU control panel to RGB 16-235, the GPU receives RGB 0-255 from Windows, but you ask the GPU to send 16-235 to the TV. Consequently, the GPU has to compress the pixel data behind Windows' back in such a way that a black pixel is no longer 0, but now 16. And a white pixel is no longer 255, but now 235. So the pixel data is condensed from 0-255 to 16-235, and all the values between 0-15 and 236-255 are basically unused. Some GPU drivers might do this in high bitdepth with dithering, which may produce acceptable results. But some GPU drivers definitely do this in 8bit without any dithering, which will introduce lots of nasty banding artifacts into the image. As a result I cannot recommend this configuration.
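To illustrate why an undithered 8bit range conversion produces banding, here is a minimal Python sketch. The rounding formula is only an assumption about what a naive conversion might look like, not the code of any actual GPU driver, and the function name is made up for this example.

```python
def full_to_limited_8bit(v: int) -> int:
    """Map a full range (0-255) value to limited range (16-235), rounded to 8bit.

    Assumed naive conversion: linear scale, plain rounding, no dithering.
    """
    return round(16 + v * 219 / 255)


# Convert every possible 8bit input level.
outputs = [full_to_limited_8bit(v) for v in range(256)]

distinct = len(set(outputs))   # how many different output levels are used
collisions = 256 - distinct    # input levels merged into an already used output

print(f"black -> {outputs[0]}, white -> {outputs[255]}")
print(f"{distinct} distinct output levels for 256 input levels")
print(f"{collisions} input levels were merged with a neighbour")
```

256 input steps have to fit into 220 output steps, so some neighbouring input levels end up on the same output value. In a smooth gradient those merged steps can show up as bands.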

If you switch your GPU control panel to YCbCr, the GPU receives RGB from Windows, but you ask the GPU to send YCbCr to the TV. Consequently, the GPU has to convert the RGB pixels behind Windows' back to YCbCr. Some GPU drivers might do this in high bitdepth with dithering, which may produce acceptable results. But some GPU drivers definitely do this in 8bit without any dithering, which will introduce lots of nasty banding artifacts into the image. Furthermore, there are various different RGB <-> YCbCr matrices available. E.g. there's one each for BT.601, BT.709 and BT.2020. Now which of these will the GPU use for the conversion? And which will the TV use to convert back to RGB? If the GPU and the TV use different matrices, color errors will be introduced. As a result I cannot recommend this configuration.
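The matrix mismatch problem can be shown with a small Python sketch. It uses the standard luma coefficients for BT.601 and BT.709 on normalized floating point values (0.0 to 1.0, no 16-235 offsets). The function names and the test color are chosen for illustration only, and which matrix a given GPU or TV really uses is not something this sketch can tell you.

```python
# Luma coefficients (Kr, Kb) of the two standards.
BT601 = (0.299, 0.114)
BT709 = (0.2126, 0.0722)


def rgb_to_ycbcr(rgb, coeffs):
    """Convert normalized RGB to YCbCr with the given (Kr, Kb) pair."""
    kr, kb = coeffs
    kg = 1.0 - kr - kb
    r, g, b = rgb
    y = kr * r + kg * g + kb * b
    cb = (b - y) / (2.0 * (1.0 - kb))
    cr = (r - y) / (2.0 * (1.0 - kr))
    return y, cb, cr


def ycbcr_to_rgb(ycc, coeffs):
    """Convert YCbCr back to normalized RGB with the given (Kr, Kb) pair."""
    kr, kb = coeffs
    kg = 1.0 - kr - kb
    y, cb, cr = ycc
    r = y + 2.0 * (1.0 - kr) * cr
    b = y + 2.0 * (1.0 - kb) * cb
    g = (y - kr * r - kb * b) / kg
    return r, g, b


pure_green = (0.0, 1.0, 0.0)

# Sender and receiver agree on BT.709: the color survives the round trip.
same_matrix = ycbcr_to_rgb(rgb_to_ycbcr(pure_green, BT709), BT709)

# Sender encodes with BT.709, receiver decodes with BT.601: the color shifts.
mismatched = ycbcr_to_rgb(rgb_to_ycbcr(pure_green, BT709), BT601)

print("same matrix:", [round(c, 4) for c in same_matrix])
print("mismatched: ", [round(c, 4) for c in mismatched])
```

With matching matrices the result equals the input up to floating point error. With mismatched matrices all three channels come back wrong, and that is before any 8bit rounding is added on top.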

Summed up: In order to get the best possible image quality, I strongly recommend setting your GPU control panel to RGB Full (0-255).

There's one problem with this approach: If your TV doesn't have an option to switch between 0-255 and 16-235, it may always expect black to be 16 (TVs usually default to 16-235 while computer monitors usually default to 0-255). But we've just told the GPU to output black at 0! That can't work, can it? Actually, it can, surprisingly - but only for video content. You can tell madVR to render to 16-235 instead of 0-255. This way madVR will make sure that black pixels get a pixel value of 16, but the GPU doesn't know about it, so the GPU can't screw image quality up for us. So if your TV absolutely requires black to arrive as 16, then still set your GPU control panel to RGB 0-255 and set madVR to 16-235. If your TV supports 0-255, then set everything (GPU control panel, TV and madVR) to 0-255.

Unfortunately, if you want applications and games to have correct black & white levels, too, all the above advice might not work out for you. If your TV doesn't support RGB 0-255, then somebody somewhere has to convert applications and games from 0-255 to 16-235, so your TV displays black & white correctly. madVR can only do this for videos, but madVR can't magically convert applications and games for you. So in this situation you may have no other choice than to set your GPU control panel to RGB 16-235 or to YCbCr. But please be aware that you might get lower image quality this way, because the GPU will have to convert the pixels behind the back of both Windows and madVR, and GPU drivers often do this in inferior quality.

B) FreeSync / G-SYNC

Games create a virtual world in which the player moves around, and for the best playing experience, we want to achieve a very high frame rate and the lowest possible latency, without any tearing. As a result, with FreeSync/G-SYNC the game simply renders as fast as it can and then throws each rendered frame to the display immediately. This results in very smooth motion, low latency and very good playability.

Video rendering has completely different requirements. Video was recorded at a very specific frame interval, e.g. 23.976 frames per second. When doing video playback, unlike games, we don't actually render a virtual 3D world. Instead we just send the recorded video frames to the display. Because we cannot actually re-render the video frames in a different 3D world view position, it doesn't make sense to send frames to the display as fast as we can render. The movie would play like fast forward, if we did that! For perfect motion smoothness, we want the display to show each video frame for *EXACTLY* the right amount of time, which is usually 1000 / 24.000 * 1.001 = 41.708333333333333333333333333333 milliseconds.
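The frame duration above can be checked with exact fractions in Python. As an extra illustration, the sketch also works out what happens if the display runs at exactly 24.000 Hz. That display rate is an assumption picked for this example and is not taken from the text above.

```python
from fractions import Fraction

# "23.976 fps" video is really 24000/1001 frames per second.
video_fps = Fraction(24000, 1001)
frame_ms = 1000 / video_fps      # exact duration of one video frame in ms

# Assumed display refresh rate for this example: exactly 24.000 Hz.
display_hz = Fraction(24)
refresh_ms = 1000 / display_hz   # duration of one display refresh in ms

# Each video frame lasts slightly longer than one refresh, so the error
# accumulates until it reaches one full refresh and a frame must be repeated.
drift_per_frame_ms = frame_ms - refresh_ms
frames_until_repeat = refresh_ms / drift_per_frame_ms
seconds_until_repeat = frames_until_repeat * frame_ms / 1000

print(f"frame duration:   {float(frame_ms):.6f} ms")
print(f"refresh duration: {float(refresh_ms):.6f} ms")
print(f"one repeated frame every {float(seconds_until_repeat):.3f} seconds")
```

Even a mismatch of about 0.04 ms per frame adds up to a visible hiccup at regular intervals, which is why the exact display time of each frame matters so much for video.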

FreeSync/G-SYNC would help with video rendering only if they had an API which allowed madVR to specify which video frame should be displayed for how long. But this is not what FreeSync/G-SYNC were made for, so such an API probably doesn't exist (I'm not 100% sure about that, though). Video renderers do not want a rendered frame to be displayed immediately. Instead they want the frames to be displayed at a specific point in time in the future, which is the opposite of what FreeSync/G-SYNC were made for.

If you believe that using FreeSync/G-SYNC would be beneficial for video playback, you might be able to convince me to implement support for that by fulfilling the following 2 requirements:

1) Show me an API which allows me to define at which time in the future a specific video frame gets displayed, and for how long exactly.

2) Donate a FreeSync/G-SYNC monitor to me, so that I can actually test a possible implementation. Developing blindly without test hardware doesn't make sense.

Last edited by madshi; 18th September 2017 at 17:10 .

Last edited by madshi; 19th September 2018 at 13:28 .

technical discussion:

I've seen many comments about HDMI 1.3 DeepColor being useless, about 8bit being enough (since even Blu-Ray is only 8bit to start with), about dithering not being worth the effort etc. Is all of that true?

It depends. If a source device (e.g. a Blu-Ray player) decodes the YCbCr source data and then passes it to the TV/projector without any further processing, HDMI 1.3 DeepColor is mostly useless. Not totally, though, because the Blu-Ray data is YCbCr 4:2:0 which HDMI cannot transport (not even HDMI 1.4). We can transport YCbCr 4:2:2 or 4:4:4 via HDMI, so the source device has to upsample the chroma information before it can send the data via HDMI. It can either upsample it in only one direction (then we get 4:2:2) or in both directions (then we get 4:4:4). Now a really good chroma upsampling algorithm outputs a higher bitdepth than what you feed it. So the 8bit source suddenly becomes more than 8bit. Do you still think passing YCbCr in 8bit is good enough? Fortunately even HDMI 1.0 supports sending YCbCr in up to 12bit, as long as you use 4:2:2 and not 4:4:4. So no problem.
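Why upsampling raises the bitdepth is easy to see with a toy example in Python. The sketch below uses plain linear interpolation in one direction, which is a deliberate simplification. Real chroma upsamplers use better filters and correct chroma positioning, and the sample values here are made up.

```python
def upsample_2x_linear(samples):
    """Double the sample count by inserting the average of each neighbour pair."""
    out = []
    for a, b in zip(samples, samples[1:]):
        out.append(float(a))
        out.append((a + b) / 2)   # new in-between sample
    out.append(float(samples[-1]))
    return out


chroma_8bit = [100, 101, 103, 103]   # made-up 8bit chroma samples
upsampled = upsample_2x_linear(chroma_8bit)

# Any value with a fractional part no longer fits into 8bit integers.
fractional = [v for v in upsampled if v != int(v)]

print("upsampled: ", upsampled)
print("needs more than 8bit:", fractional)
```

The sample between 100 and 101 comes out as 100.5. Storing it in 8bit means rounding it to 100 or 101, so some of the precision the upsampler produced is thrown away again.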

But here comes the big problem: Most good video processing algorithms produce a higher bitdepth than you feed them. So if you actually change the luma (brightness) information or if you even convert the YCbCr data to RGB, the original 8bit YCbCr 4:2:0 mutates into a higher bitdepth data stream. Of course we can still transport that via HDMI 1.0-1.2, but we will have to dumb it down to the maximum that HDMI 1.0-1.2 supports.

For us HTPC users it's even worse: The graphics cards do not offer any way for us developers to output untouched YCbCr data. Instead we have to use RGB. Ok, e.g. in ATI's control panel with some graphics cards and driver versions you can activate YCbCr output, *but* it's rather obvious that internally the data is converted to RGB first and then later back to YCbCr, which is usually not a good idea if you care about max image quality. So the only true choice for us HTPC users is to go RGB. But converting YCbCr to RGB increases bitdepth. Not only from 8bit to maybe 9bit or 10bit. Actually YCbCr -> RGB conversion gives us floating point data! And not even HDMI 1.4 can transport that. So we have to convert the data down to some integer bitdepth, e.g. 16bit or 10bit or 8bit. The problem is that doing that means that our precious video data is violated in some way. It loses precision. And that is where dithering comes to the rescue. Dithering allows us to "simulate" a higher bitdepth than we really have. Using dithering means that we can go down to even 8bit without losing too much precision. However, dithering is not magic, it works by adding noise to the source. So the preserved precision comes at the cost of increased noise. Fortunately thanks to film grain we're not too sensitive to fine image noise. Furthermore the amount of noise added by dithering is so low that the noise itself is not really visible. But the added precision *is* visible, at least in specific test patterns (see image comparisons above).
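Here is a minimal Python sketch of how dithering "simulates" extra bitdepth. It uses simple random dithering as an assumed stand-in, which is not necessarily the algorithm any particular renderer uses. The level 100.25, the pixel count and the function names are made up for this example.

```python
import math
import random


def quantize_plain(value: float) -> int:
    """Round to the nearest integer level, no dithering."""
    return round(value)


def quantize_dithered(value: float, rng: random.Random) -> int:
    """Random dithering: round up with a probability equal to the fractional part."""
    return math.floor(value + rng.random())


rng = random.Random(0)   # fixed seed so the run is repeatable
true_level = 100.25      # high precision value that 8bit cannot store
n_pixels = 20000         # a flat area of the image

plain = [quantize_plain(true_level) for _ in range(n_pixels)]
dithered = [quantize_dithered(true_level, rng) for _ in range(n_pixels)]

plain_mean = sum(plain) / n_pixels
dithered_mean = sum(dithered) / n_pixels

print(f"true level:    {true_level}")
print(f"plain mean:    {plain_mean}")
print(f"dithered mean: {dithered_mean:.3f}")
```

Without dithering every pixel becomes 100 and the extra quarter step is lost. With dithering roughly a quarter of the pixels become 101, so the area averages out close to 100.25 at the cost of a little noise.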

So does dithering help in real life situations? Does it help with normal movie watching?

Well, that is a good question. I can say for sure that in most movies in most scenes dithering will not make any visible difference. However, I believe that in some scenes in some movies there will be a noticeable difference. Test patterns may exaggerate, but they rarely lie. Furthermore, preserving the maximum possible precision of the original source data is for sure a good thing, so there's not really any good reason to not use dithering.

So what purpose/benefit does HDMI DeepColor have? It will allow us to lower (or even totally eliminate) the amount of dithering noise added without losing any precision. So it's a good thing. But the benefit of DeepColor over using 8bit RGB output with proper dithering will be rather small.

Last edited by madshi; 23rd May 2010 at 09:25 .

Looks like it uses PC levels without any option to expand TV->PC. Other than that, seems to work fine in my extremely brief test. Works fine as a custom renderer in zoomplayer. Quite slow in changing window size and going between fullscreen/windowed mode, but I guess that's to be expected.

(nice job, by the way)

Last edited by TheFluff; 8th April 2009 at 19:33 .


these test patterns look somewhat familiar

awesome project btw! do you plan on adding jitter correction(a la HR) and means to synchronise to the VSYNC fliptime ?

this is the real enemy : http://software.intel.com/en-us/arti...ynchronization

96MB seems quite a lot, why not only 5-6 frames?

hopefully when it'll be mature, James won't mind adding support for it in Reclock

EDIT: oh cool it reads LUT's from yesgrey's software

madshi, have you tested this with neuron2's VC1/AVC CUDA decoder, or coreavc's CUDA? That can get you back some h/w acceleration.

do you plan on adding jitter correction(a la HR) and means to synchronise to the VSYNC fliptime ?

96MB seems quite a lot, why not only 5-6 frames?

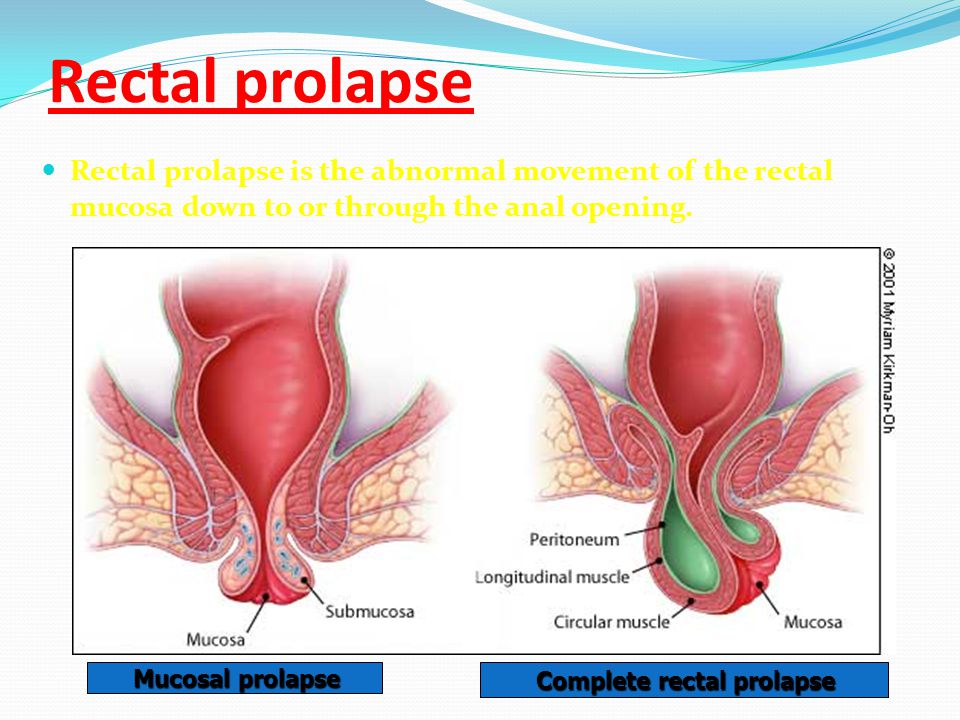

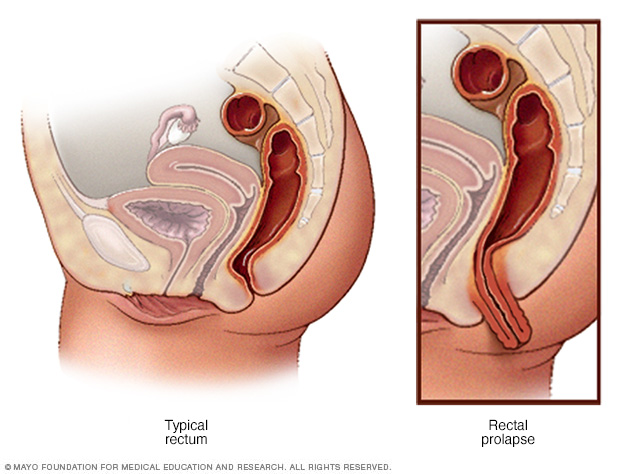

Originally Posted by Rectal Prolapse

madshi, have you tested this with neuron2's VC1/AVC CUDA decoder, or coreavc's CUDA? That can get you back some h/w acceleration.

@madshi

Could you also post screenshot from MPC-HC with enabled shader YV12 Chroma Upscaling (EVR Custom)


Last edited by leeperry; 8th April 2009 at 22:19 .


@leeperry

What does 'EE' stand for?

Edge Enhancement, which often produces ringing (typically white ghost lines next to high contrast edges).


Thanks Madshi

It would be awesome if you integrated it with Reclock (sync to V-sync or something). Smoothness is the most important to me (and no tearing!).

Many thanks Madshi for this new creation!

However, I am not sure I have understood its added value compared to VMR9/EVR for typical usage. I mean, is there any real benefit to using this renderer if no resize is needed for the playback of a 1080p movie on a 1080p screen using HDMI 1.0?

On a side note I have noticed that when a player that uses the renderer (in my case zoomplayer) is in the background the CPU usage rises to 50% even in pause mode.

Here is the link for the new reclock release that already supports madVR. I requested it from James (reclock's current developer) and he was so nice to add it immediately.

http://forum.slysoft.com/showthread.php?t=19931

I just knew madshi was cooking up something, but I suspected he might be the force behind the mysterious SlyPlayer.

This is really amazing work, madshi, thank you very much! It proves once again that when it comes to obsessing about quality nothing beats German engineering. And I'm in total agreement, when we can get the best quality why compromise?

One question: Does madVR actually support 10-bit data paths in GPU drivers?

Is there a list of what apps work with this renderer so far? I'm particularly interested if anyone has it working with:

- JR Media Center

- Arcsoft TMT V3

Thanks

Nathan
